Completely locked-in man uses brain-computer interface to communicate


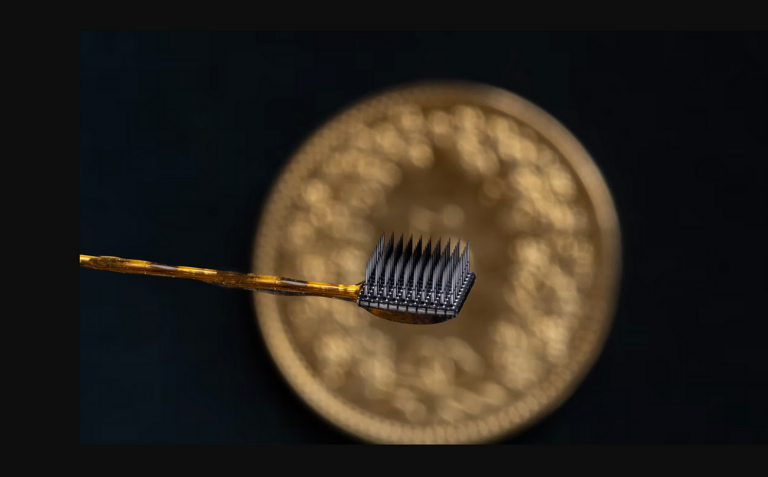

Researchers at the Wyss Center Geneva created a device and a computer program to decode brain signals

Completely paralyzed and unable to speak, a 30-year-old man was able to communicate with his caregivers and his family. He even managed to ask his 4-year-old son: “Do you want to watch Disney’s Robin Hood with me?” Researchers at the Wyss Center for Bio and Neuroengineering in Geneva have enabled this man to communicate again, thanks to two 64-microelectrode arrays implanted in his brain and a computer interface. The clinical case study has been underway for more than two years with the participant, who suffers from advanced amyotrophic lateral sclerosis (ALS) – a progressive neurodegenerative disease in which people lose the ability to move and speak.

Locked-in patient can hear and interact

“This study answers a long-standing question about whether people with full locked-in syndrome – that is, who have lost even eye movement – also lose their brain’s ability to generate commands to communicate,” said Jonas Zimmermann, senior neuroscientist at the Wyss Center in Geneva and co-author of the study.

The results show that communication is possible. Consciousness and cognitive function are not affected. The patient could volitionally select letters to form words and phrases to express his desires and experiences using a neurally-based auditory neurofeedback system independent of his vision.

The study participant lives at home with his family and has learned to generate brain activity by attempting different movements. These brain signals are picked up by the implanted microelectrodes and are decoded in real-time by a machine learning model. The model interprets the signals as meaning “yes” or “no”. To reveal what the participant wants to communicate, a spelling program reads the letters of the alphabet aloud. Using auditory neurofeedback, the participant can choose “yes” or “no” to confirm or reject the letter, and ultimately form words and whole sentences.
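For readers curious how letter-by-letter spelling can work with only a “yes” or “no” signal, here is a minimal sketch in Python. It is not the Wyss Center's software: the names `spell`, `simulated_user` and the `<end>` option are hypothetical, and the neural decoder is replaced by a simple callback that answers “yes” to the next letter of a known target phrase.

```python
from typing import Callable, List

ALPHABET = list("abcdefghijklmnopqrstuvwxyz") + [" "]
END = "<end>"  # hypothetical option that finishes the sentence


def spell(decide: Callable[[str], bool], max_passes: int = 100) -> str:
    """Build a sentence by offering options one at a time and reading yes/no."""
    sentence: List[str] = []
    for _ in range(max_passes):
        chosen = None
        for option in ALPHABET + [END]:
            # In a real system the option would be read aloud and `decide`
            # would wrap the decoder's output; here it is a plain callback.
            if decide(option):
                chosen = option
                break
        if chosen is None:
            continue  # every option was rejected: start another pass
        if chosen == END:
            break
        sentence.append(chosen)
    return "".join(sentence)


def simulated_user(target: str) -> Callable[[str], bool]:
    """Stand-in for the decoder: answers "yes" to the next letter of `target`."""
    position = 0

    def decide(option: str) -> bool:
        nonlocal position
        wanted = target[position] if position < len(target) else END
        if option == wanted:
            if option != END:
                position += 1
            return True
        return False

    return decide


print(spell(simulated_user("robin hood")))
```

Each letter costs one pass through the alphabet in this sketch, which gives a sense of why spelling a full sentence this way is slow and why faster, more natural methods are being explored.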

Human-machine interaction

The Wyss Center researchers are now trying to refine their technology. They are working on how to enable speech decoding directly from the brain during imagined speech, which could lead to more natural communication.

American entrepreneur Elon Musk, with his company Neuralink, is also working on a similar project. The first tests of his brain implant on human volunteers could begin this year.

The number of people with ALS is increasing, and more than 300,000 people are projected to be living with the disease by 2040, with many of them reaching a state where speech is no longer possible.

Read the full study in Nature Communications.